Our Framework for Measuring Algorithmic Performance and Potential

In Part I of our algorithmic optimization framework series, we moved away from the idea that algorithmic playlists across platforms like Spotify are “triggered” by scale or engagement. The reality is that Discover Weekly, Release Radar, and other algorithmic playlists do not activate once a track crosses some arbitrary threshold. Instead, algorithmic reach expands as the system accumulates consistent, interpretable evidence that a track performs well within specific recommendation contexts.

Once you move beyond a rigid, static view of algorithmic systems, a more practical and strategic question emerges:

If stream volumes, popularity scores, or even engagement metrics don’t fully explain algorithmic behavior, how can artists and teams meaningfully assess algorithmic performance and potential?

Over the past several years, working on streaming optimization campaigns across both major and independent catalogs, Music Tomorrow has developed an algorithmic audit framework designed to answer precisely that question. Grounded in years of R&D on how Spotify and comparable recommendation systems operate, the framework focuses on deep yet measurable algorithmic signals that form the foundation of our proprietary catalog audit methodology: which audience clusters are currently associated with an artist’s recommendation profile, how much growth opportunity remains within those clusters, how algorithmic exposure converts into listener feedback, and whether the surrounding ecosystem provides fertile ground for further expansion.

Taken together, these dimensions transform opaque algorithmic performance patterns into a structured decision system — revealing where further marketing investment is likely to compound, and where the impact of additional effort will face structural limitations.

Positioning Quality: Is the Artist Recommended to the Right Audiences?

Every algorithmic stream can be traced back to identifiable audience clusters: groups of listeners united by overlapping taste, behavioral, and contextual patterns. The strategic question is therefore not simply whether you are receiving algorithmic traffic, but where that traffic comes from.

Is exposure concentrated in audience clusters that align with the artist’s creative direction and long-term goals — audiences where the music is likely to resonate and generate sustained positive feedback? Or is the artist connected to a fragmented mix of disjointed communities that are unlikely to convert into durable growth engines?

At Music Tomorrow, this dimension is captured through what we refer to as alignment. Alignment describes how closely a given algorithmic audience reflects the artist’s work, identity, and strategic priorities.

An artist may generate algorithmic streams while being anchored within spaces that do not reflect their intended scene, geography, or contextual positioning. In such cases, trends can appear positive in surface-level dashboards while concealing internal structural constraints.

Conversely, strong alignment between audience clusters and strategic priorities does not automatically guarantee scale — an artist may be well positioned yet lack sufficient authority within that niche to be consistently recommended over competitors. However, positioning fit does create the preconditions for growth: when audience alignment is strong, listeners are more likely to respond positively once the track is recommended, increasing the probability of further expansion and repeat recommendations.

From a marketing standpoint, this is where many strategies break down. Marketing campaigns that are overly broad, or disconnected from artist positioning, often generate momentary visibility without lasting impact. Tracks may briefly surface across multiple algorithmic properties but fail to settle into a repeatable discovery pattern the system can reliably build on. In extreme cases, untargeted or misaligned marketing actions can even damage the artist’s algorithmic profile and long-term growth, drowning out positive organic signals in a sea of disjointed ad-driven streams and pushing the project into algorithmic limbo.

So, Music Tomorrow’s catalog audit begins with this structural mapping: identifying the audience clusters currently driving exposure and evaluating how well they reflect the artist’s intended positioning. Without this layer of analysis, it is nearly impossible to distinguish between temporary exposure and sustainable, strategic alignment.
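One simple way to make the idea of alignment concrete is to ask what share of algorithmic streams comes from clusters the team considers strategically on-target. The sketch below is an illustrative simplification, not Music Tomorrow's actual alignment metric; the function name, cluster labels, and stream counts are all hypothetical.

```python
from typing import Mapping, Set


def aligned_share(streams_by_cluster: Mapping[str, int],
                  target_clusters: Set[str]) -> float:
    """Share of algorithmic streams coming from strategically aligned clusters.

    Hypothetical proxy: the article does not define alignment numerically.
    """
    total = sum(streams_by_cluster.values())
    if total == 0:
        return 0.0  # no algorithmic traffic yet, so nothing to evaluate
    aligned = sum(count for cluster, count in streams_by_cluster.items()
                  if cluster in target_clusters)
    return aligned / total


# Made-up example: most traffic is on-target, but 40% comes from
# clusters unrelated to the artist's intended positioning.
example_streams = {"indie_folk_uk": 6_000, "lofi_study": 3_000, "workout_pop": 1_000}
example_share = aligned_share(example_streams, {"indie_folk_uk"})
```

A low share would suggest that visible algorithmic traffic is concentrated in clusters unlikely to support durable growth, even if total stream counts look healthy.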

Audience Coverage: How Much Room Is There to Grow?

While positioning highlights where the artist is recommended, audience coverage measures how much addressable upside remains untapped.

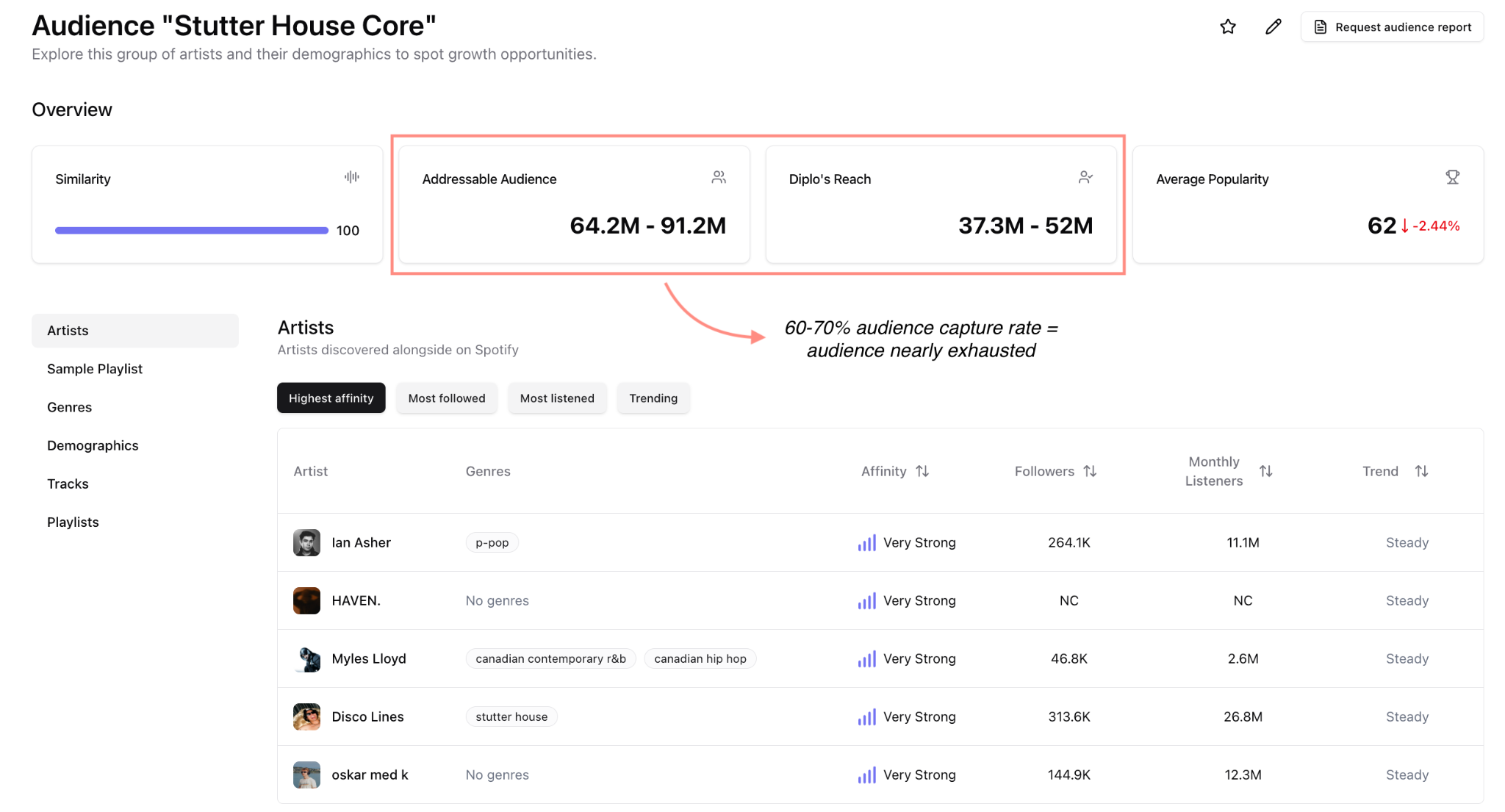

To assess this dimension, we rely on two core proprietary metrics: Addressable Audience and Algorithmic Outreach.

Each algorithmic audience represents a finite pool of reachable listeners. We define the size of that pool as the Addressable Audience — the total number of listeners that could plausibly be reached given the artist’s current positioning. Algorithmic Outreach, by contrast, measures how much of that addressable audience the system is actively attempting to reach. It functions similarly to impressions in digital marketing, reflecting exposure potential rather than realized streams.

Comparing these two metrics across audiences and artists reveals structural headroom. If outreach represents only a small fraction of the addressable audience, significant expansion potential remains within the current positioning. If most of the addressable audience has already been reached, that cluster may be approaching saturation, placing structural limits on further growth.
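The comparison above can be sketched as a simple ratio. This is a minimal illustration that assumes both metrics are available as listener counts per cluster; the class, the field names, the sample figures, and the 0.8 saturation threshold are assumptions made for the example rather than part of the audit methodology.

```python
from dataclasses import dataclass

# Illustrative cut-off only; the article does not specify a saturation threshold.
SATURATION_THRESHOLD = 0.8


@dataclass
class ClusterCoverage:
    name: str
    addressable_audience: int  # listeners plausibly reachable in this cluster
    algorithmic_outreach: int  # listeners the system is actively trying to reach

    @property
    def coverage(self) -> float:
        """Fraction of the addressable audience already covered by outreach."""
        if self.addressable_audience <= 0:
            return 0.0
        return min(self.algorithmic_outreach / self.addressable_audience, 1.0)

    @property
    def headroom(self) -> int:
        """Listeners in the cluster that outreach has not yet touched."""
        return max(self.addressable_audience - self.algorithmic_outreach, 0)

    @property
    def near_saturation(self) -> bool:
        return self.coverage >= SATURATION_THRESHOLD


# Made-up numbers: one cluster with ample headroom, one close to saturation.
open_cluster = ClusterCoverage("cluster_a", 500_000, 40_000)
saturated_cluster = ClusterCoverage("cluster_b", 60_000, 54_000)
```

In this example, `cluster_a` has low coverage and large headroom, while `cluster_b` is flagged as near saturation despite a much higher coverage ratio.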

This distinction is critical: many label teams relying on surface-level performance metrics tend to over-invest in artists and tracks that already generate visible algorithmic streams. However, without understanding the relationship between current exposure and the remaining audience opportunity, it is impossible to determine whether additional marketing spend will produce incremental gains.

An artist may generate substantial algorithmic traffic while operating within a fully saturated niche — already recommended to most suitable listeners, with limited pathways for further expansion. Our audit framework quantifies both opportunity size and conversion depth, enabling teams to prioritize investment and target audiences with demonstrable, measurable growth potential.

Feedback Quality: Does Exposure Convert to Streaming Engagement?

Exposure and engagement metrics are often treated as standalone indicators of success. However, exposure alone does not drive long-term growth. Exposure that converts into strong, contextually coherent listener feedback does.

Feedback quality evaluates how effectively current algorithmic reach translates into meaningful user signals within each audience cluster. Saves, completion rates, repeat listens, downstream artist engagement, and sustained session behavior become relevant here — but only when they are interpreted within the appropriate audience context. Rather than treating engagement as a universal benchmark, we analyze outreach-to-stream conversion and audience-level engagement signals relative to opportunity.

Your strategy can’t blindly follow engagement signals alone: strong feedback generated by a small super-specialized audience may reinforce current positioning, but it does little to unlock pathways to future growth. Conversely, weaker feedback within a larger, strategically relevant cluster may still justify continued investment if structural upside remains meaningful.
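To illustrate why conversion needs to be read relative to opportunity, the sketch below compares a small, highly engaged cluster with a larger cluster that converts less well. It assumes, purely for illustration, that the current outreach-to-stream conversion rate would hold across the remaining headroom, which is a strong simplification. All names and figures are hypothetical.

```python
def conversion_rate(streams: int, outreach: int) -> float:
    """Outreach-to-stream conversion: streams generated per listener reached."""
    return streams / outreach if outreach > 0 else 0.0


def projected_upside(streams: int, outreach: int, addressable: int) -> float:
    """Rough estimate of additional streams if the untouched part of the
    addressable audience converted at the current rate (an assumption)."""
    headroom = max(addressable - outreach, 0)
    return conversion_rate(streams, outreach) * headroom


# Small specialized cluster: strong conversion, almost no headroom left.
niche_upside = projected_upside(streams=9_000, outreach=18_000, addressable=20_000)

# Larger relevant cluster: weaker conversion, large untouched audience.
broad_upside = projected_upside(streams=6_000, outreach=40_000, addressable=500_000)
```

Under these made-up numbers, the niche cluster converts at 50% but has little left to give, while the broader cluster converts at 15% and still projects far more additional streams.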

This integrated perspective reveals whether positioning is merely generating traffic — or building the system’s confidence required for sustained algorithmic growth.

Scene Momentum: Is the Ecosystem Expanding?

Finally, algorithmic audiences do not exist in isolation. Each audience cluster sits within a broader, dynamic ecosystem shaped by artist adjacency, evolving genre dynamics, and shifting listener behavior.

Scene momentum evaluates whether the ecosystem anchoring the artist is growing. Are reference artists within the cluster attracting new listeners? Is the broader scene trending upward? Are there adjacent audience clusters that create natural expansion pathways for the artist’s music?

When scene-level growth is positive, structural conditions support expansion: a rising tide lifts all boats. An artist positioned within a trending streaming niche (for example, a mid-size Afrobeat/Amapiano artist) might find that even limited audience opportunities translate into substantial streaming growth. When the niche is stagnant or declining, on the other hand, even strong audience and engagement signals may result in stabilization rather than sustained growth.

Our catalog audit framework incorporates audience growth signals alongside artist-level performance metrics to ensure that investment decisions reflect not only current exposure, but also broader ecosystem dynamics.
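One rough proxy for scene momentum is the aggregate listener growth of a cluster's reference artists over a fixed period. The sketch below assumes listener counts are available at two points in time; the function name, artist keys, and figures are hypothetical, and the actual audit may weigh these signals differently.

```python
from typing import Mapping


def scene_growth(listeners_start: Mapping[str, int],
                 listeners_end: Mapping[str, int]) -> float:
    """Relative change in combined listeners across reference artists.

    Only artists present in both snapshots are compared, so that newly
    added or removed artists do not distort the growth figure.
    """
    shared = listeners_start.keys() & listeners_end.keys()
    start_total = sum(listeners_start[a] for a in shared)
    end_total = sum(listeners_end[a] for a in shared)
    if start_total == 0:
        return 0.0
    return (end_total - start_total) / start_total


# Made-up snapshots for two reference artists in the same cluster.
snapshot_start = {"ref_artist_a": 100_000, "ref_artist_b": 50_000}
snapshot_end = {"ref_artist_a": 120_000, "ref_artist_b": 60_000}
example_growth = scene_growth(snapshot_start, snapshot_end)
```

A positive value suggests the surrounding scene is expanding; a value near zero or below suggests that strong artist-level signals may translate into stabilization rather than growth.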

From Algorithmic Audit to Data-Driven Investment Strategy

Considered together, these four dimensions — positioning quality, audience coverage, feedback quality, and scene momentum — transform algorithmic optimization from reactive guesswork into grounded investment strategy.

Positioning clarifies whether the artist is reaching the right audiences. Audience coverage quantifies current impressions and reveals how much room for growth still remains. Feedback quality measures whether exposure is reinforcing expansion. Scene momentum defines the broader ecosystem trajectory and potential for future growth.

Tracks with strong positioning but limited audience coverage often benefit from reinforcement rather than expansion: deepening engagement within aligned contexts before pushing outward. Tracks that generate broad outreach but weak conversion typically require repositioning — or more precise targeting — rather than additional spend. Tracks anchored in declining scenes may simply have lower long‑term potential, regardless of short‑term visibility gains.
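The heuristics above can be expressed as a small rule set. This is a sketch under stated assumptions: the four scores are taken as already computed, and every threshold below is an arbitrary placeholder chosen for illustration, not a value used in the audit.

```python
from dataclasses import dataclass


@dataclass
class TrackAudit:
    alignment: float     # 0..1 positioning quality score (hypothetical scale)
    coverage: float      # algorithmic outreach / addressable audience
    conversion: float    # streams / algorithmic outreach
    scene_growth: float  # relative listener growth of the surrounding scene


def recommend(audit: TrackAudit) -> str:
    """Map the four audit dimensions to a coarse action (placeholder thresholds)."""
    # Declining scenes cap long-term potential regardless of short-term gains.
    if audit.scene_growth < 0:
        return "deprioritize"
    # Well positioned but barely covered: deepen engagement in aligned contexts.
    if audit.alignment >= 0.7 and audit.coverage < 0.3:
        return "reinforce"
    # Broad outreach that fails to convert: fix targeting before spending more.
    if audit.coverage >= 0.3 and audit.conversion < 0.1:
        return "reposition"
    return "monitor"


example_action = recommend(
    TrackAudit(alignment=0.85, coverage=0.08, conversion=0.25, scene_growth=0.2)
)
```

In practice these dimensions interact in more nuanced ways than a first-match rule list can capture, so a sketch like this is best read as a way to structure the conversation rather than automate the decision.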

From a marketing standpoint, this reframing is critical. Many campaigns are optimized for immediate exposure, but exposure that is disconnected from positioning, audience opportunity, or scene momentum rarely translates into durable discovery. Sustainable algorithmic growth tends to come from fewer, more deliberate actions that align with how the system is already reasoning about the track — rather than attempts to override that logic with scale alone.

Rather than reacting to streams the system is already generating, our framework allows teams to anticipate where investments are most likely to pay off and place strategic bets on artists and tracks with strong indications of algorithmic growth potential. Music Tomorrow’s catalog audit program applies the assessment model described in this article at scale — mapping your entire catalog, ranking audience clusters by algorithmic growth potential, and delivering clear, actionable insights that integrate directly into label marketing operations.

Recommendation systems do not respond to volume alone. They respond to reinforced patterns of audience fit. The better you understand the audience clusters connected to your catalog — and the dynamics governing them — the more effectively you can allocate marketing budgets, prioritize campaigns, and build sustainable streaming growth strategies in the age of algorithmic discovery.